Facebook came under fire recently for allegedly suppressing pro-conservative news on its Trending newsfeed. The social networking giant argued that, while editors were involved, its use of computer algorithms to determine what's trending precludes bias.

We're not here to take a stand on Facebook's role in setting news flow. What's most interesting to us, as professional investors, is the perception of a computer algorithm as a detached and impartial tool. This topic is particularly relevant today, when smart beta and other quantitative techniques are increasingly being used to construct portfolios.

As New York Times technology writer Farhad Manjoo pointed out in his article on the controversy, "It's true that beyond the Trending box, most of the stories Facebook presents to you are selected by its algorithms, but those algorithms are as infused with bias as any other human editorial decision."

Algorithms: Designed by Humans

That's also true of many of the rules-based approaches used to construct ETFs. Indeed, any set of rules used to make selections, whether of news items or stocks, is written by people and is thus the product of human assumptions and beliefs.

To illustrate how profound this "human touch" can be, let's take a look at two popular low-volatility ETFs, commonly used in smart-beta and other passive investing strategies. Both ETFs aim to take advantage of the well-documented low-beta effect to capture equity returns with less risk. Both use rules-based approaches, and both have been very successful at delivering risk-adjusted performance (and attracting assets). Both outperformed the S&P 500 Index over the past three years.

However, let's take a quick peek under the hood at the algorithms used to construct these ETFs. That's when the influence of human choices on the composition of these ETFs' holdings and, ultimately, on their pattern of performance, becomes most apparent.

Low-Volatility ETF A uses a simple construction rule: to own the 100 stocks that have exhibited the lowest volatility over the prior year, and weight them based on the inverse of their volatility (i.e., give the greatest weight to the least volatile stock). Low-Volatility ETF B uses a completely different methodology. It employs an optimization framework to build a portfolio that it expects to have the lowest risk possible based on each individual stock's historical volatility and its correlation to other securities. The overall portfolio is then subject to constraints relative to the capitalization-weighted index. If that sounds complicated, that's because it is.
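To make the contrast concrete, here is a minimal sketch of the two weighting rules in Python with NumPy. It is an illustration under stated assumptions, not either ETF's actual methodology: the function names, the use of sample standard deviation as the volatility measure, and the synthetic return data are all hypothetical. The second function solves only the textbook unconstrained minimum-variance problem, so it leaves out the constraints relative to the capitalization-weighted index and can produce negative weights.

```python
import numpy as np


def inverse_vol_weights(returns, n_stocks=100):
    """ETF A-style rule (illustrative): hold the n_stocks least volatile
    names and weight each by the inverse of its volatility.

    returns: array of shape (periods, stocks) of periodic returns.
    Assumes every stock has nonzero volatility.
    """
    vol = returns.std(axis=0, ddof=1)      # sample volatility per stock
    n = min(n_stocks, vol.size)
    chosen = np.argsort(vol)[:n]           # indices of the least volatile stocks
    inv = 1.0 / vol[chosen]
    weights = np.zeros(vol.size)
    weights[chosen] = inv / inv.sum()      # normalize so weights sum to 1
    return weights


def min_variance_weights(returns):
    """ETF B-style idea (illustrative, unconstrained): choose fully invested
    weights that minimize portfolio variance using volatilities AND
    correlations, i.e. weights proportional to inverse(covariance) @ ones.
    """
    cov = np.cov(returns, rowvar=False)    # sample covariance across stocks
    raw = np.linalg.solve(cov, np.ones(cov.shape[0]))
    return raw / raw.sum()


if __name__ == "__main__":
    # Synthetic returns for three hypothetical stocks (assumed data).
    rng = np.random.default_rng(0)
    demo = rng.normal(0.0, [0.01, 0.02, 0.04], size=(250, 3))
    print("inverse-vol weights:", inverse_vol_weights(demo, n_stocks=2))
    print("min-variance weights:", min_variance_weights(demo))
```

Even in this toy version, the designer has to decide how many stocks to hold, how to measure volatility, over what window, and whether correlations matter. Each of those decisions is a human judgment that shapes the resulting portfolio.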

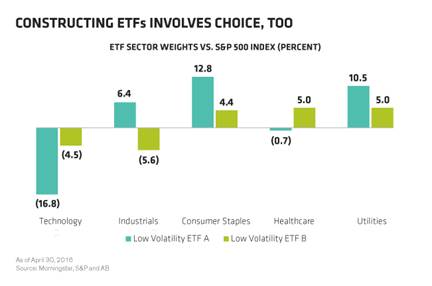

The differences in methodology make for significant differences in each ETF's holdings, which in turn provide insights into how these products may perform in the future and the kinds of environments that may be favorable or challenging for them.

For example, because it focuses only on recent volatility, ETF A has a very large exposure to traditionally safe-haven or economically defensive sectors such as consumer staples and utilities as well as, perhaps surprisingly, industrials. ETF B, on the other hand, is also overweight utilities, but its approach results in a large overweight to health care and much greater exposure to technology than ETF A has.

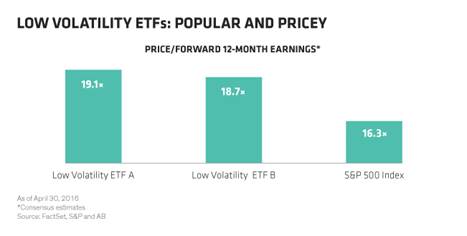

One thing the two have in common is that they both trade at a premium to the market. That reflects the outperformance of lower-risk stocks in recent years and, more directly, the fact that neither ETF considers valuation in its construction.

A Better Way: Data-Informed and Active

These low-volatility ETF methodologies offer a lot of good ideas, many of which are applicable to designing a better core equity strategy. But there is another way to tap this return potential: by actively targeting earnings quality, balance-sheet strength and attractive valuation.

We think this is a better methodology. But is it unbiased? Of course not. It reflects our perspective on the best way to achieve risk-adjusted returns.

As the Facebook incident showed, computers aren't necessarily unbiased when it comes to news judgment—or selecting your portfolio. The algorithms used to create passive or smart-beta portfolios reflect the assumptions and beliefs of the humans who designed them. And just because one approach did better than the other over a period of time doesn't mean that it will continue to do so. For one thing, the ETFs' concentrations in pricey sectors and stocks pose a significant risk, in our view—and a risk that many investors seem to be giving short shrift. In the end, investors need to look at these strategies with their eyes wide open.

The views expressed herein do not constitute research, investment advice or trade recommendations and do not necessarily represent the views of all AB portfolio-management teams.

Christopher W. Marx is the Portfolio Manager—Equities at AllianceBernstein.